How GenAI Fraudsters Fly Under the Radar (and How to Ground Them Before It’s Too Late)

We’re seeing it nearly every day now. With stories of both large-scale attacks and hyper-targeted social engineering schemes, genAI-powered fraud is dominating headlines and striking fear into both businesses and consumers.

As a fraud tool, genAI is evolving quickly and becoming more and more accessible. Fraudsters love it because it blends the best of both attack worlds: they can now combine the power of a brute-force ambush with the sophistication of their most advanced, bespoke attacks.

Today’s superpowered fraud bots are exceptionally good at mimicking human behavior and circumventing the device and network intelligence checks that would’ve revealed their predecessors. For businesses that handle high application volume day to day, these attacks are particularly dangerous: modern bots can slip through traditional detection tools, letting a swath of AI-powered fraudulent applicants enter a business’s ecosystem without causing a significant ripple in its total application volume.

Let’s look at one attack as a representative example. In this instance, we saw first-hand how fraudsters target fast-growing digital businesses and how easily they can slip through the cracks.

Fraudsters Hiding in the Shadows: A Real-World Example of Evolving Tactics

Merchant onboarding companies come face-to-face with fraud every day. Because they prioritize easy onboarding over fraud prevention, some expect up to 15% of applications to be fraudulent as a baseline, and the nature of their business means a single attack can result in millions in losses.

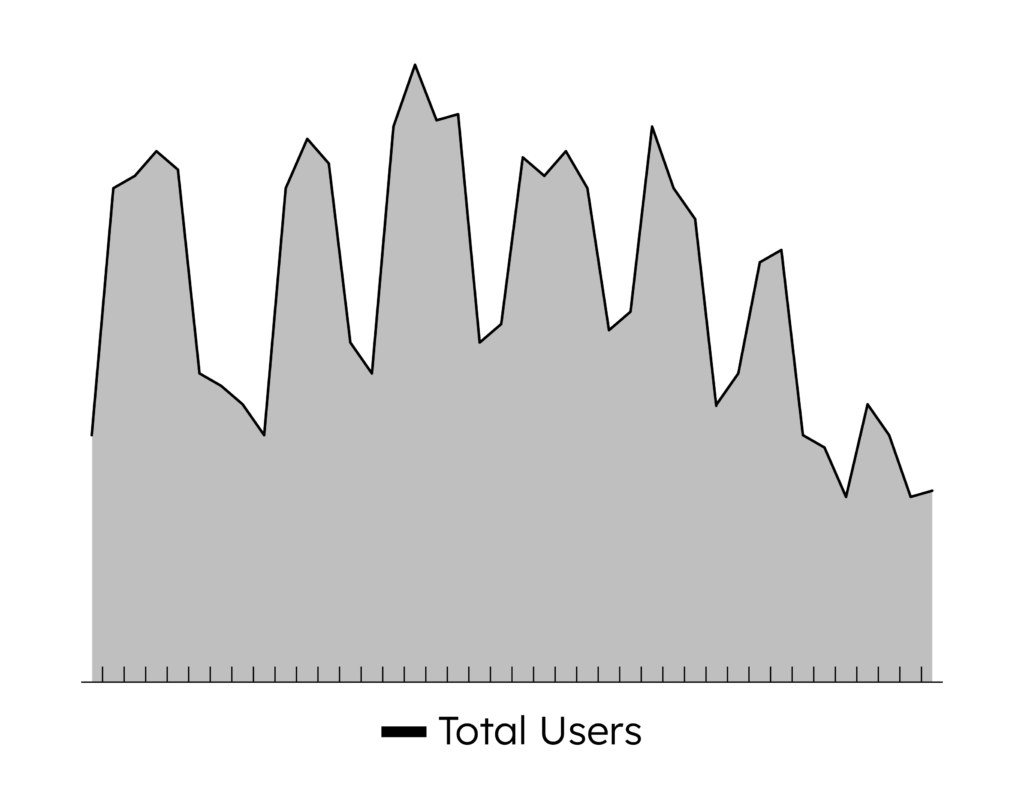

One merchant onboarding fintech grew accustomed to massive overall application volume and, in turn, a steady stream of fraudulent applicants. While fraud typically remained stable, their overall volume followed a peak-and-valley pattern as users applied during business hours. As the fintech loosened their decisioning process in order to onboard customers faster, the surges in volume only grew larger.

One week, the fintech experienced record-high application numbers. On the surface, it was business as usual: the overall application volume appeared to be following its usual pattern, in line with day-to-day trends. Had the fintech looked only for abnormalities in its total volume, nothing would have seemed out of the ordinary; in fact, the surge would have been a welcome sign of growth and might even have encouraged the company to relax its defenses further.

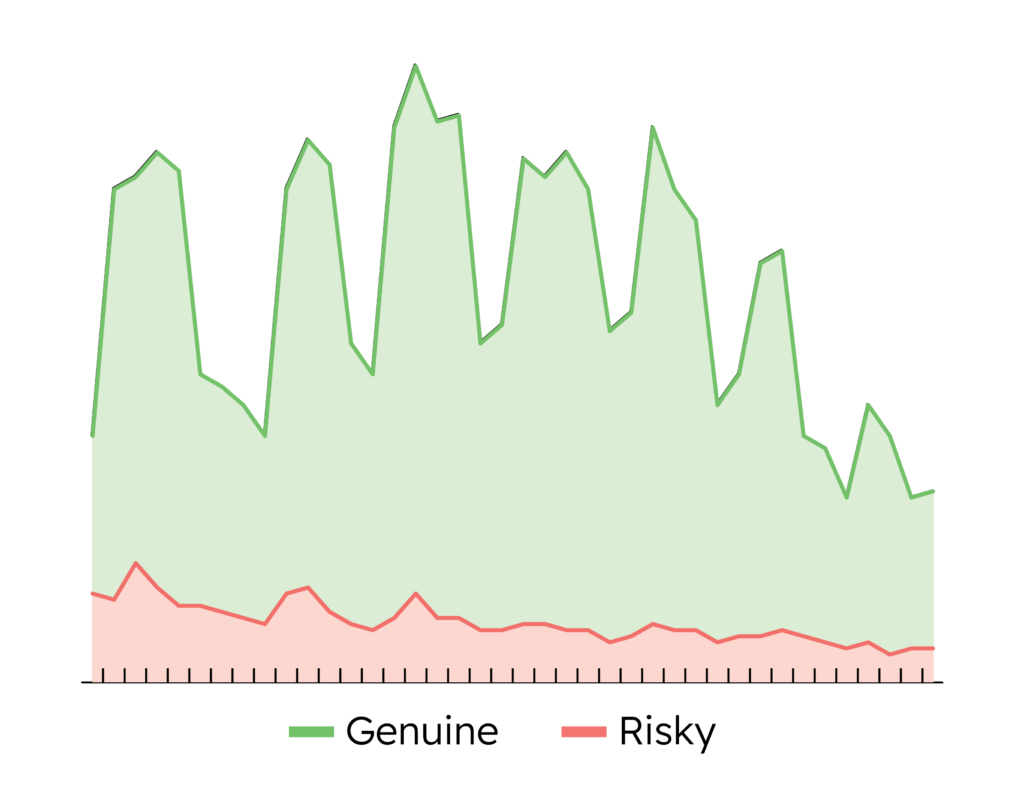

However, the NeuroID dashboard revealed a fraud ring lurking under the waves of applicants. With crowd-level visibility into user behavior, the fintech was able to spot a spike in its typically consistent fraud volume occurring during an application surge. It wasn’t a massive spike, just a ratio jump of 13 percentage points, but this coordinated fraud ring could’ve had multimillion-dollar implications had it gone undetected. Using NeuroID, the fintech found the vulnerabilities in its onboarding process that fraudsters were exploiting and closed them without adding friction for its genuine customers.
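
To make the idea concrete, here is a minimal Python sketch of why watching the fraud ratio matters more than watching total volume. It is an illustration only, not how the NeuroID dashboard works: the function name `fraud_ratio_spikes`, the 10-point alert threshold, and the daily counts are all hypothetical, chosen so that the baseline sits near 15% and one day jumps by 13 percentage points, as in the story above.

```python
from statistics import median

def fraud_ratio_spikes(buckets, min_jump_pp=10.0):
    """Flag time buckets whose risky-application share jumps above baseline.

    buckets: iterable of (label, total_applications, flagged_applications).
    min_jump_pp: hypothetical alert threshold, in percentage points.
    Returns a list of (label, ratio_percent, jump_in_percentage_points).
    """
    # Share of applications flagged as risky in each bucket, as a percentage.
    ratios = [
        (label, 100.0 * flagged / total)
        for label, total, flagged in buckets
        if total > 0
    ]
    # The median is used as the baseline so a single spike does not skew it.
    baseline = median(ratio for _, ratio in ratios)
    return [
        (label, round(ratio, 1), round(ratio - baseline, 1))
        for label, ratio in ratios
        if ratio - baseline >= min_jump_pp
    ]

# Hypothetical week: (day, total applications, applications flagged as risky).
# Total volume climbs steadily, so Thursday looks like ordinary growth.
week = [
    ("Mon", 1000, 150),
    ("Tue", 1100, 165),
    ("Wed", 1200, 180),
    ("Thu", 1300, 364),
    ("Fri", 1250, 188),
]

spikes = fraud_ratio_spikes(week)
print(spikes)
```

In this made-up data, Thursday's total of 1,300 applications fits the growth trend, yet its flagged share is 28% against a 15% baseline. A check on total volume alone would pass it; a check on the ratio would not.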

Modern Fraud Attacks Need Modern Solutions

Was this a genAI fraud attack? There’s no way to truly tell. But genAI fraud attacks are coming from all angles, tools like FraudGPT are widely available, and the increasing accessibility of sophisticated fraud tools is a big reason why intelligent bot attacks nearly quadrupled in the first half of 2023.

Today’s bots are more advanced than ever; if you’re only stopping them by looking for lightning-fast data entry or tell-tale device and network red flags, it’s time to revamp your approach. Even if your tried-and-true fraud stack is holding its own today, it’s only a matter of time until fraudsters evolve to beat it.

Stopping today’s attacks requires a proactive, adaptive, and scalable solution. NeuroID’s next-generation, end-to-end fraud protection checks all of these boxes. If you’re looking for a modern defense against today’s fraud threats, set up a call with one of our experts.